Introduction

Author: Movania Muhammad Mobeen

Hello readers. In this article, we will learn how to port the 5th tutorial, on lighting calculation, to the new OpenGL 3.3. In the last tutorial, we saw how to load a texture-mapped 3ds model in OpenGL 3.3 and above. With that knowledge in hand, we can now explore how to handle lighting on meshes. Since the first tutorial, we know that geometry handling in OpenGL 3.0 and above requires vertex buffer objects (VBOs), with their state managed by a vertex array object (VAO). We already loaded the texture-mapped 3ds mesh in the last tutorial, so the geometry handling for this tutorial is exactly the same. The only thing that changes in this tutorial is the lighting part. In fixed-function OpenGL, lighting is calculated per vertex, so we will do exactly that in our vertex shader. While a lot of things are hidden from the user in the fixed-function pipeline, with shaders everything is in your hands: you, the programmer, decide what you want to do.

Basic lighting concepts

Before proceeding, I would like to spend some time on the basics of lighting in general. The lighting computation in computer graphics can take place in any coordinate space: object space, world space, view space or even screen space. Whatever our choice is, we must make sure that all of the vectors used in the calculation (vertex normals, light vector, view vector) are in the same space. We also give the position of our light source. In our case, we assume a point light source, which lights the whole sphere surrounding its position. The fourth coordinate of the light position selects the type of light: 1 signifies a point light source and 0 signifies a directional light source. The reason for this comes from the vector maths. A 4x4 transformation matrix is conveniently divided into four sub-parts, as shown below:

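$$
\begin{bmatrix}
1 & 0 & 0 & T_x \\
0 & 1 & 0 & T_y \\
0 & 0 & 1 & T_z \\
0 & 0 & 0 & 1
\end{bmatrix}
=
\left[\begin{array}{ccc|c}
1 & 0 & 0 & T_x \\
0 & 1 & 0 & T_y \\
0 & 0 & 1 & T_z \\
\hline
0 & 0 & 0 & 1
\end{array}\right]
$$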

The upper left 3x3 sub matrix is the orientation component. The right column vector is the translation component. If a matrix is multiplied by a vector whose fourth component (w) is 0, it essentially removes the translation component of the matrix and thus we are only left with the rotational component which represents the orientation. Hence, if the light position has the fourth component 0, it represents a directional light otherwise it represents a point light source.
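The effect of the fourth component can be checked with a few lines of C++. This is only a minimal sketch using the standard library: the `Vec4` and `Mat4` types and the `makeTranslation` and `transform` helpers are names made up for this illustration and are not part of the tutorial's source code.

```cpp
// Hypothetical minimal types for illustration (not from the tutorial code).
struct Vec4 { float x, y, z, w; };
struct Mat4 { float m[4][4]; };  // row-major: m[row][column]

// Build a matrix with identity orientation and translation (tx, ty, tz).
Mat4 makeTranslation(float tx, float ty, float tz) {
    return Mat4{{{1.0f, 0.0f, 0.0f, tx},
                 {0.0f, 1.0f, 0.0f, ty},
                 {0.0f, 0.0f, 1.0f, tz},
                 {0.0f, 0.0f, 0.0f, 1.0f}}};
}

// Multiply a 4x4 matrix by a column vector.
Vec4 transform(const Mat4& a, const Vec4& v) {
    const float in[4] = {v.x, v.y, v.z, v.w};
    float out[4] = {0.0f, 0.0f, 0.0f, 0.0f};
    for (int r = 0; r < 4; ++r)
        for (int c = 0; c < 4; ++c)
            out[r] += a.m[r][c] * in[c];
    return Vec4{out[0], out[1], out[2], out[3]};
}
```

With a translation of (5, -2, 3), the point (1, 2, 3, 1) moves to (6, 0, 6, 1), while the direction (1, 2, 3, 0) comes back unchanged because the translation column is multiplied by w = 0.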

Per vertex lighting using shaders

The OpenGL fixed-function pipeline performs its lighting calculation in eye space, and the light position is given in eye space. Thus, we need to transform our vertex positions and normals to eye space as well. We get the eye space vertex positions by multiplying them with the modelview matrix (MV). For normal vectors, we cannot use the modelview matrix; instead, we must use the inverse transpose of its upper left 3x3 sub matrix (also called the normal matrix). Once we have the eye space vertex positions, we can calculate the light vector (L) as follows:

vec4 esVertex = MV*vec4(vVertex,1);

vec3 L = normalize(lightPos.xyz-esVertex.xyz);

The normal vectors are then obtained as follows:

vec3 Nr = normalize(N*vNormal);

vec3 V = vec3(0,0,1);

The eye position in eye space is (0,0,0,1) and the eye is looking down the negative Z axis (0,0,-1), so the view vector becomes (0,0,1). Once we have the three vectors, we can evaluate the lighting model per vertex (Gouraud shading) to calculate the light contribution as follows:

vec3 H = normalize(V+L);

vec4 A = mat_ambient*light_ambient;

float diffuse = max(dot(Nr,L),0.0);

float pf = 0.0;

if (diffuse > 0.0)

{

pf = pow(max(dot(Nr,H), 0.0), mat_shininess);

}

vec4 S = light_specular*mat_specular* pf;

vec4 D = diffuse*mat_diffuse*light_diffuse;

color = A + D + S;

We calculate three components: the ambient term (A), the diffuse term (D) and the specular term (S). The ambient term is trivial. It just represents the constant background light contribution, and we obtain it by multiplying the ambient material component with the ambient light component. If we have a global ambient term, its product with the ambient material component must be added to the result as well.

We obtain the diffuse contribution (D) by taking the dot product between the normal and the light vector, clamping it to zero, and multiplying it with the diffuse components of the material and the light.

We obtain the specular contribution (S) by taking the dot product between the normal and the halfway vector (H), which lies halfway between the view vector (V) and the light vector (L). This dot product is then raised to the power of the material shininess value, which gives us a specular highlight. The higher the shininess value, the sharper the highlight becomes. The total specular term is obtained by multiplying this value with the specular components of the material and the light.

The final light contribution is the sum of the ambient, diffuse and specular terms. We calculate the color value per vertex and store it in an output variable of the vertex shader. This output is smoothly interpolated by the rasterizer and then arrives as an input in the fragment shader. The fragment shader then multiplies this color with the color from the texture map as follows:

#version 330

smooth in vec2 vTexCoord;

smooth in vec4 color;

out vec4 vFragColor;

uniform sampler2D textureMap;

void main(void)

{

vFragColor = texture(textureMap, vTexCoord)*color;

}
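When debugging a lighting shader, it can help to evaluate the same formulas on the CPU for a single vertex and compare the numbers. The following is a minimal sketch of that idea in plain C++. It assumes RGB colors stored in a `Vec3` (the shader uses `vec4`), and the `Material`, `Light` and `shadeVertex` names are hypothetical helpers for this illustration, not part of the tutorial's source code.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical minimal vector type, used here for directions and RGB colors.
struct Vec3 { float x, y, z; };

Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, Vec3 b) { return {a.x * b.x, a.y * b.y, a.z * b.z}; }  // component-wise, as in GLSL
Vec3 operator*(float s, Vec3 a) { return {s * a.x, s * a.y, s * a.z}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 a) { return (1.0f / std::sqrt(dot(a, a))) * a; }

struct Material { Vec3 ambient, diffuse, specular; float shininess; };
struct Light { Vec3 position, ambient, diffuse, specular; };  // position in eye space

// Mirrors the vertex shader maths: esVertex is the eye space position,
// Nr the normalized eye space normal.
Vec3 shadeVertex(Vec3 esVertex, Vec3 Nr, const Light& light, const Material& mat) {
    const Vec3 L = normalize(light.position - esVertex);  // light vector
    const Vec3 V = {0.0f, 0.0f, 1.0f};                    // view vector
    const Vec3 H = normalize(V + L);                      // halfway vector
    const Vec3 A = mat.ambient * light.ambient;
    const float diffuse = std::max(dot(Nr, L), 0.0f);
    float pf = 0.0f;
    if (diffuse > 0.0f) {
        // Clamp before pow so the base is never negative.
        pf = std::pow(std::max(dot(Nr, H), 0.0f), mat.shininess);
    }
    const Vec3 D = diffuse * (mat.diffuse * light.diffuse);
    const Vec3 S = pf * (mat.specular * light.specular);
    return A + D + S;
}
```

For a vertex at the origin with normal (0,0,1), a white light at (0,0,1) and a material with ambient 0.25, diffuse 0.5 and specular 0.125, all three terms contribute fully and each channel sums to 0.875. Flipping the normal to (0,0,-1) leaves only the ambient 0.25.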

Passing uniforms/attributes and rendering mesh

Like the previous tutorials, we load our shader first. Then we call the CreateAndLinkProgram() function and use the shader so that we may store the attribute and uniform locations as follows:

shader.LoadFromFile(GL_VERTEX_SHADER, "shader.vert");

shader.LoadFromFile(GL_FRAGMENT_SHADER, "shader.frag");

shader.CreateAndLinkProgram();

shader.Use();

shader.AddAttribute("vVertex");

shader.AddAttribute("vUV");

shader.AddAttribute("vNormal");

shader.AddUniform("N");

shader.AddUniform("MV");

shader.AddUniform("MVP");

shader.AddUniform("lightPos");

shader.AddUniform("mat_ambient");

shader.AddUniform("mat_diffuse");

shader.AddUniform("mat_specular");

shader.AddUniform("mat_shininess");

shader.AddUniform("light_ambient");

shader.AddUniform("light_diffuse");

shader.AddUniform("light_specular");

shader.AddUniform("textureMap");

shader.AddUniform("has_texture");

glUniform1i(shader("textureMap"),0);

glUniform4fv(shader("lightPos"),1, light_position);

glUniform4fv(shader("light_ambient"),1, light_ambient);

glUniform4fv(shader("light_diffuse"),1, light_diffuse);

glUniform4fv(shader("light_specular"),1, light_specular);

glUniform4fv(shader("mat_ambient"),1, mat_ambient);

glUniform4fv(shader("mat_diffuse"),1, mat_diffuse);

glUniform4fv(shader("mat_specular"),1, mat_specular);

glUniform1fv(shader("mat_shininess"),1, mat_shininess);

shader.UnUse();

In the render function, we first clear the framebuffer. Then we set up our matrices as follows:

//setup matrices

glm::mat4 T = glm::translate(glm::mat4(1.0f),glm::vec3(0.0f, 0.0f, -20));

glm::mat4 Rx = glm::rotate(T, rotation_x, glm::vec3(1.0f, 0.0f, 0.0f));

glm::mat4 Ry = glm::rotate(Rx, rotation_y, glm::vec3(0.0f, 1.0f, 0.0f));

glm::mat4 MV = glm::rotate(Ry, rotation_z, glm::vec3(0.0f, 0.0f, 1.0f));

glm::mat3 N = glm::transpose(glm::inverse(glm::mat3(MV)));

glm::mat4 MVP = P*MV;

Then we bind our vertex array object, use our shader program, pass our uniforms (the normal matrix (N), the modelview matrix (MV) and the combined modelview projection matrix (MVP)) and issue our glDrawElements call as before. Finally, we stop using our shader, unbind our VAO and swap the back buffer:

glBindVertexArray(vaoID);

shader.Use();

glUniformMatrix3fv(shader("N"), 1, GL_FALSE, glm::value_ptr(N));

glUniformMatrix4fv(shader("MV"), 1, GL_FALSE, glm::value_ptr(MV));

glUniformMatrix4fv(shader("MVP"), 1, GL_FALSE, glm::value_ptr(MVP));

glDrawElements(GL_TRIANGLES, object.polygons_qty*3, GL_UNSIGNED_SHORT, 0);

shader.UnUse();

glBindVertexArray(0);

glutSwapBuffers();

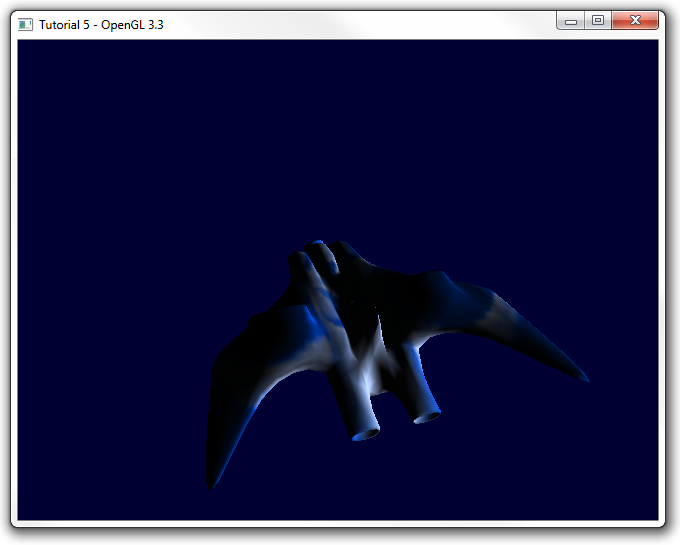

Note that the per vertex lighting shader presented here has not taken into consideration the attenuation of light since by default this contribution is off in fixed function pipeline. If however this component is large, we must reduce the light contributions by the attenuation amount. Thats it for per vertex lighting in OpenGL3.3. Running the code gives us the following output:

SOURCE CODE

The Source Code of this lesson can be downloaded from the Tutorials Main Page